Research

My primary research interests are machine learning, computer vision, and natural language processing.

Since 2020, I have been working on diverse topics in machine learning and computer vision. My research encompasses object detection and tracking, domain adaptation/generalization, 3D geometry, and scene understanding.

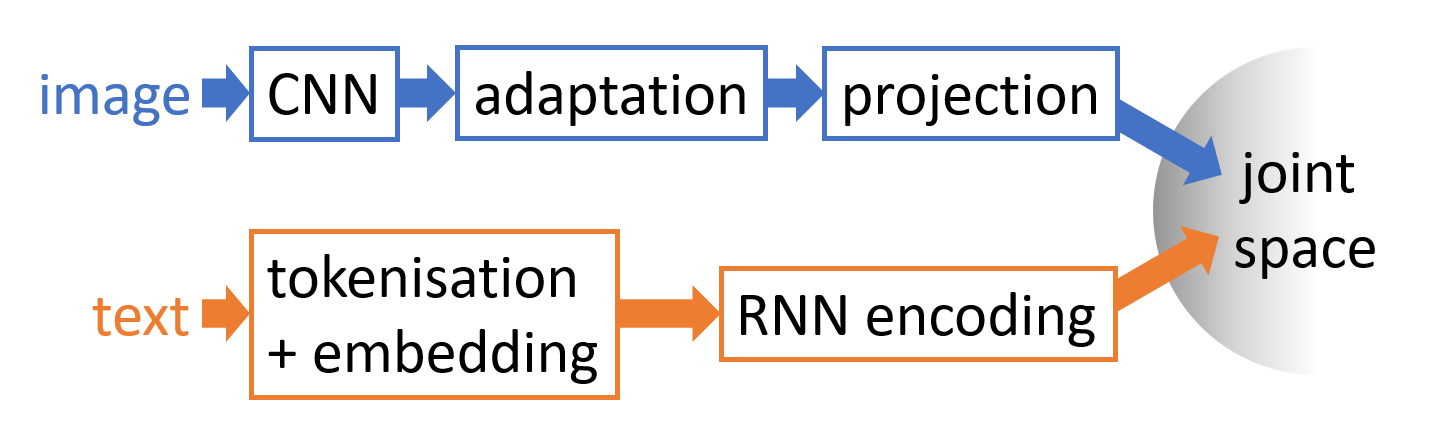

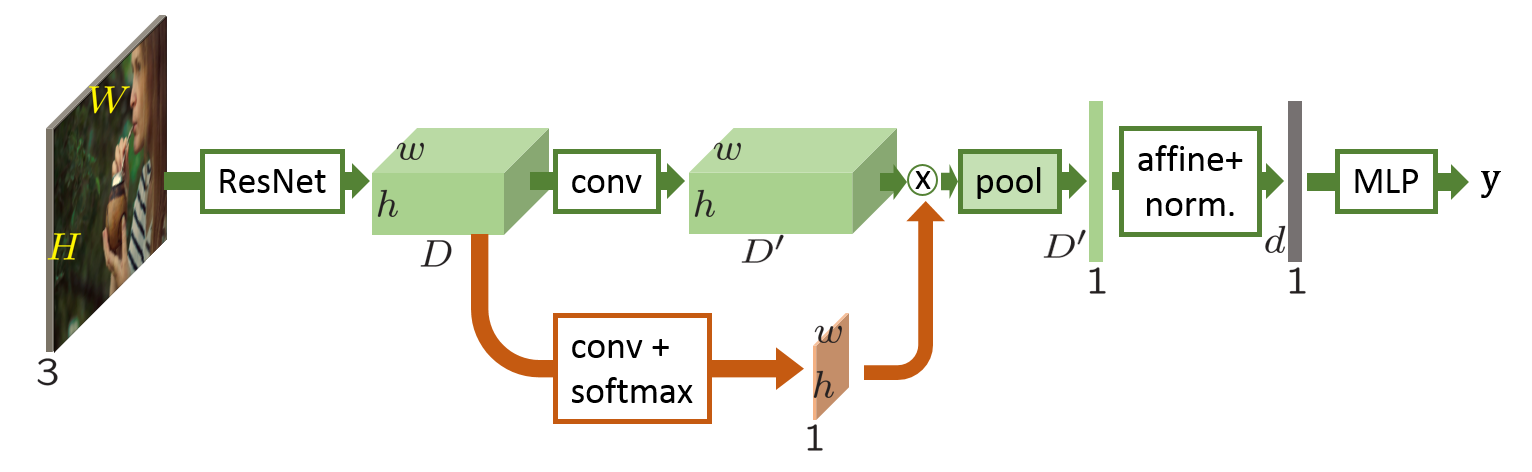

From 2017 to 2020, I pursued my PhD, which focused on joint visual and textual learning, with a specific emphasis on Visual-Semantic Embeddings (VSE). During this period, I was fortunate to be advised by Matthieu Cord, Patrick Pérez, and Louis Chevallier.

My doctoral research centered on multimodal representation learning, applied to tasks including cross-modal retrieval, phrase grounding, ranking optimization, known instance search, and iterative search.

Looking ahead, I am particularly interested in embodied AI, multimodal interaction, and 3D scene understanding, areas that I believe will play important roles in the future of artificial intelligence and computer vision.

Projects

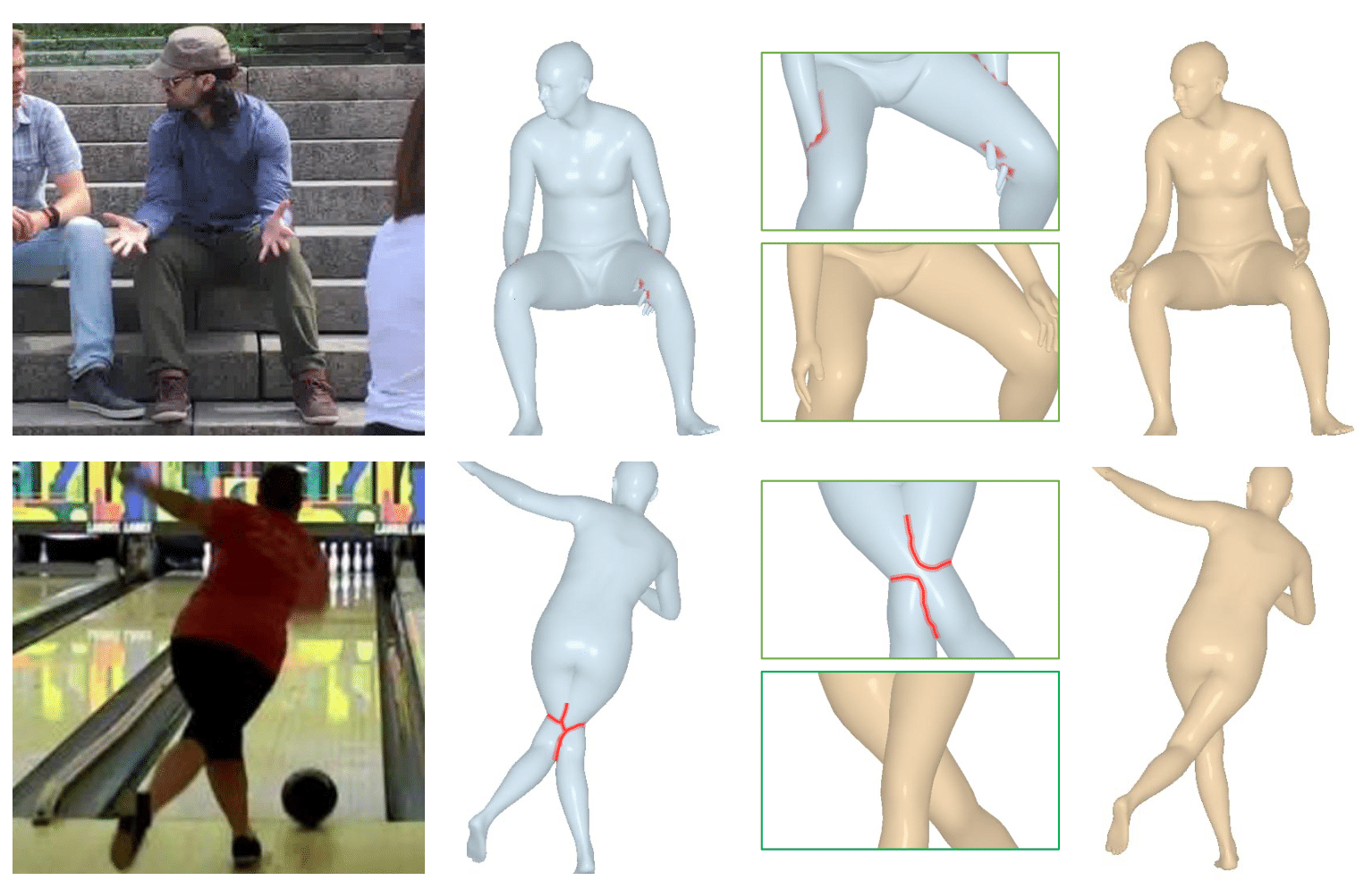

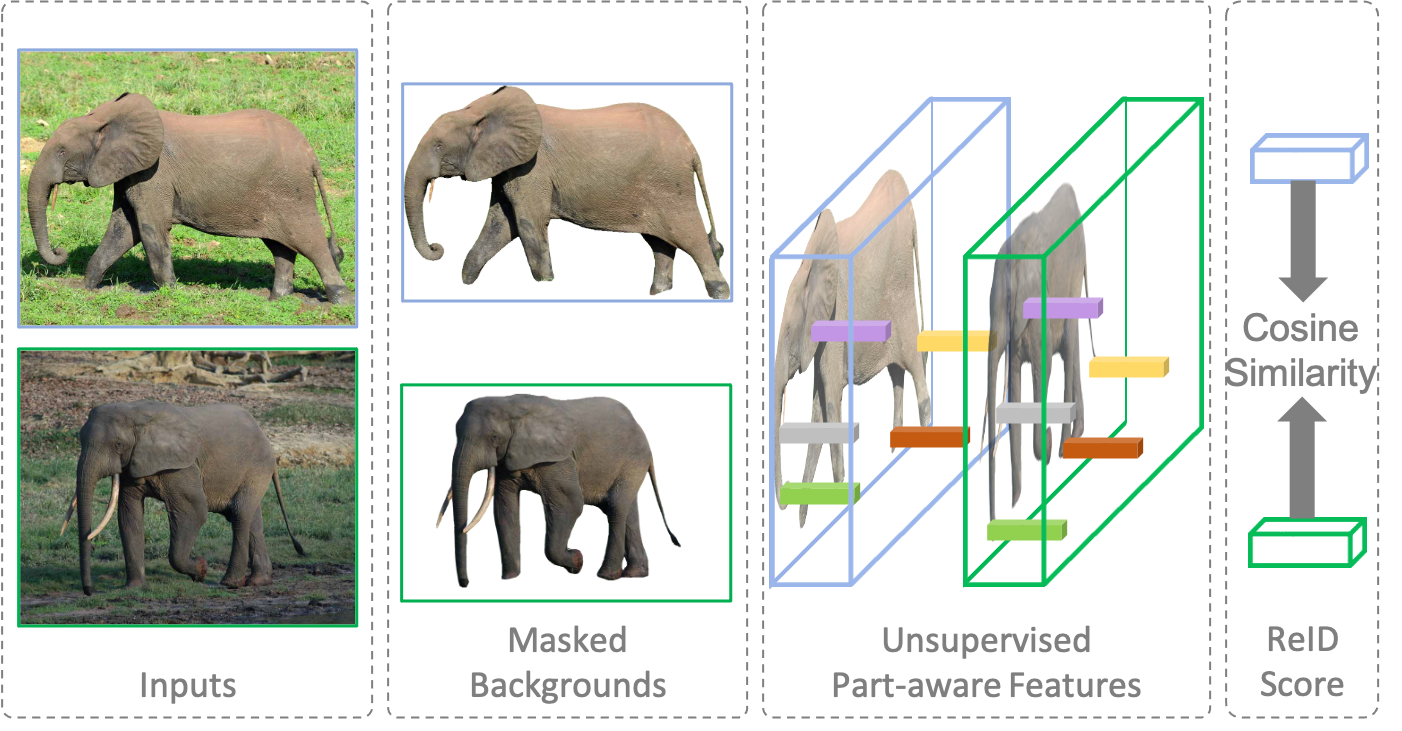

Addressing the Elephant in the Room: Robust Animal Re-Identification with Unsupervised Part-Based Feature Alignment

Y. Yu, Vidit, A. Davydov, M. Engilberge, P. Fua

CVPRW, 2024

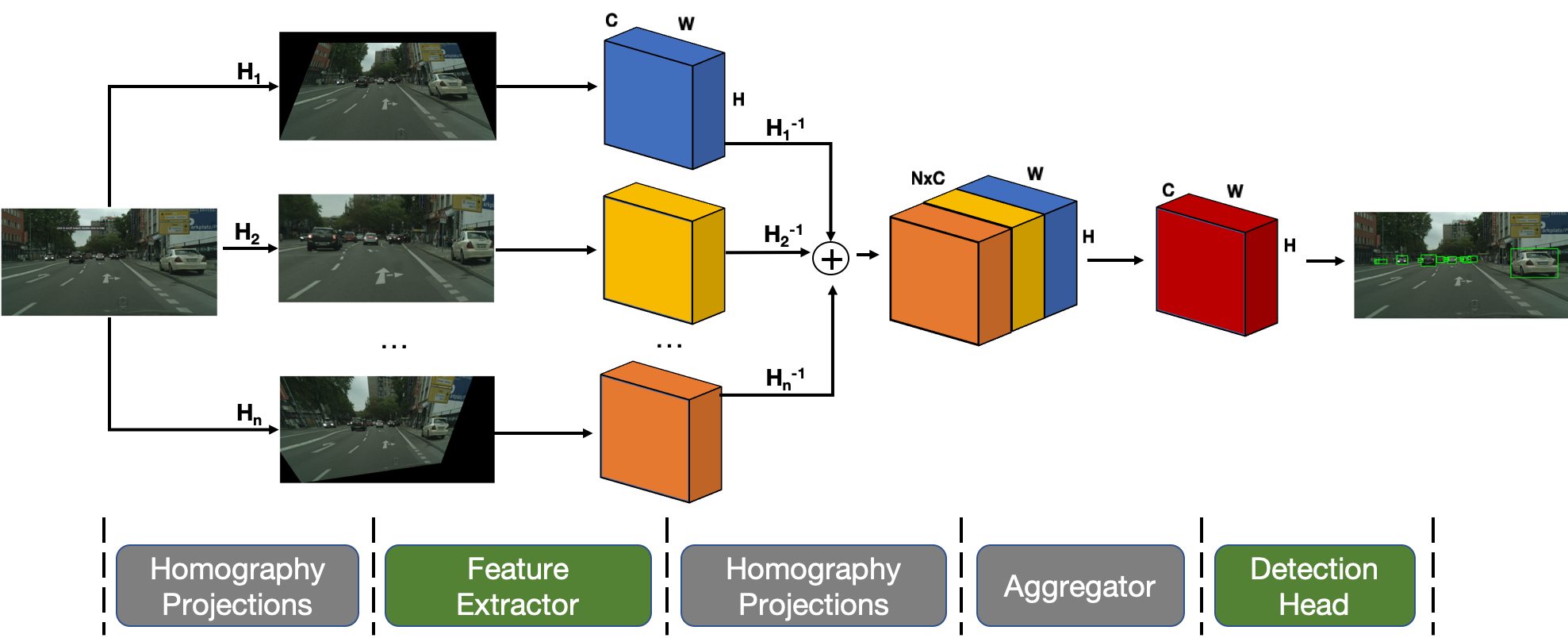

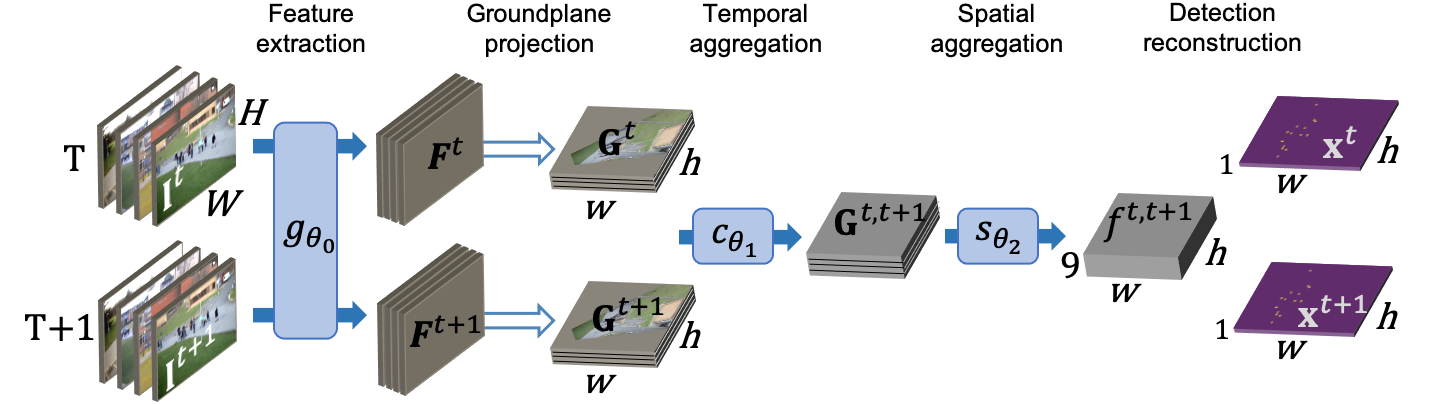

Multi-view Tracking Using Weakly Supervised Human Motion Prediction

M. Engilberge, W. Liu, P. Fua

WACV, 2023

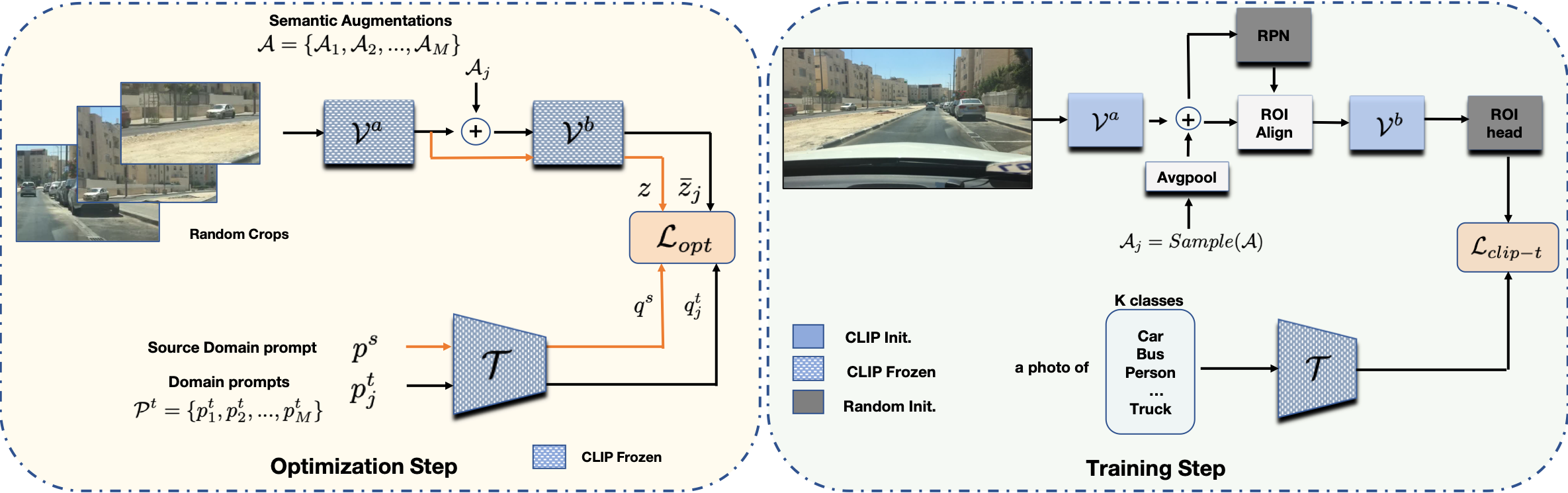

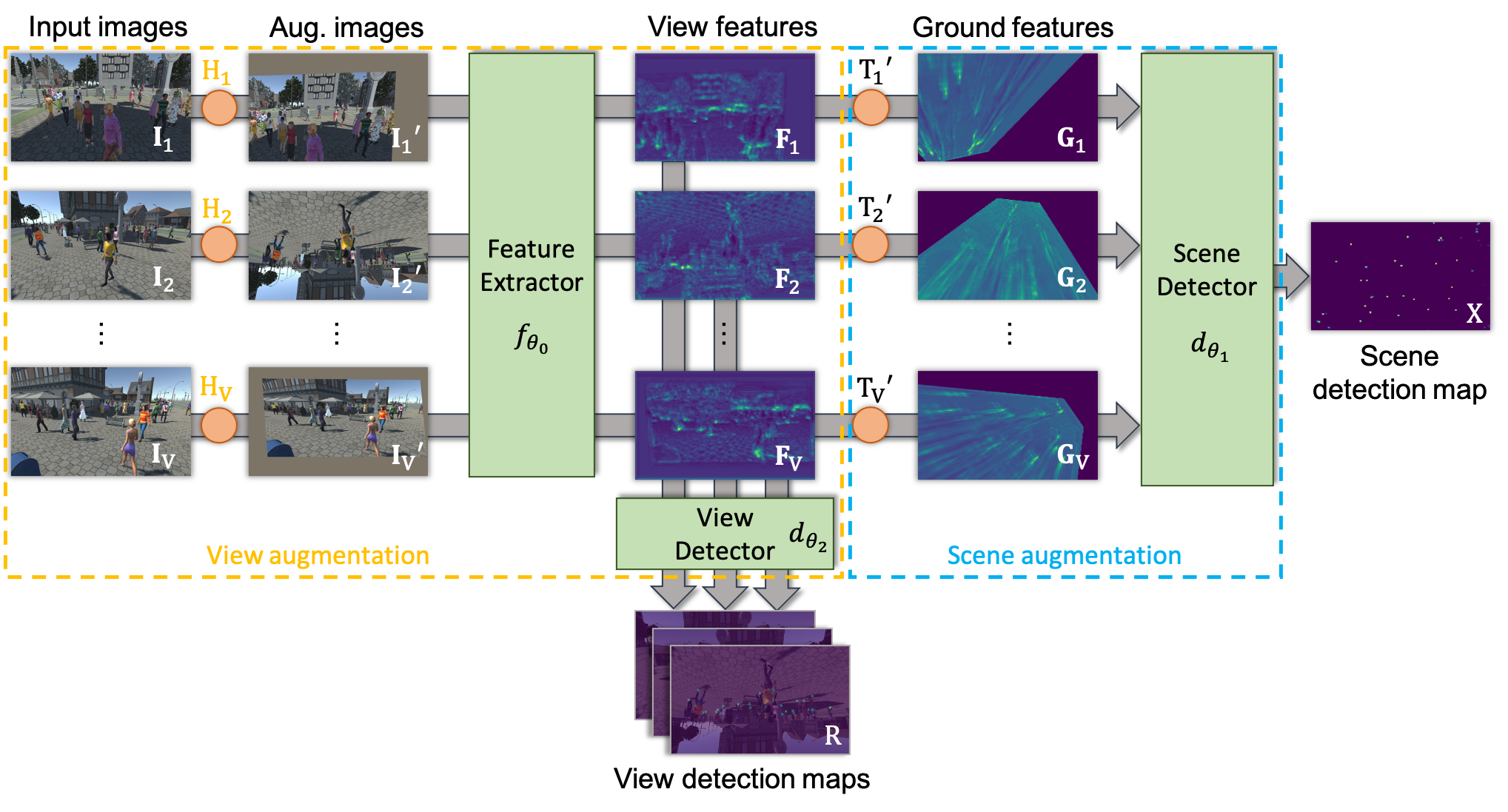

Two-level Data Augmentation for Calibrated Multi-view Detection

M. Engilberge, H. Shi, Z. Wang, P. Fua

WACV, 2023

VideoMem: Constructing, Analyzing, Predicting Short-Term and Long-Term Video Memorability

R. Cohendet, C. Demarty, N. Duong, M. Engilberge

ICCV, 2019

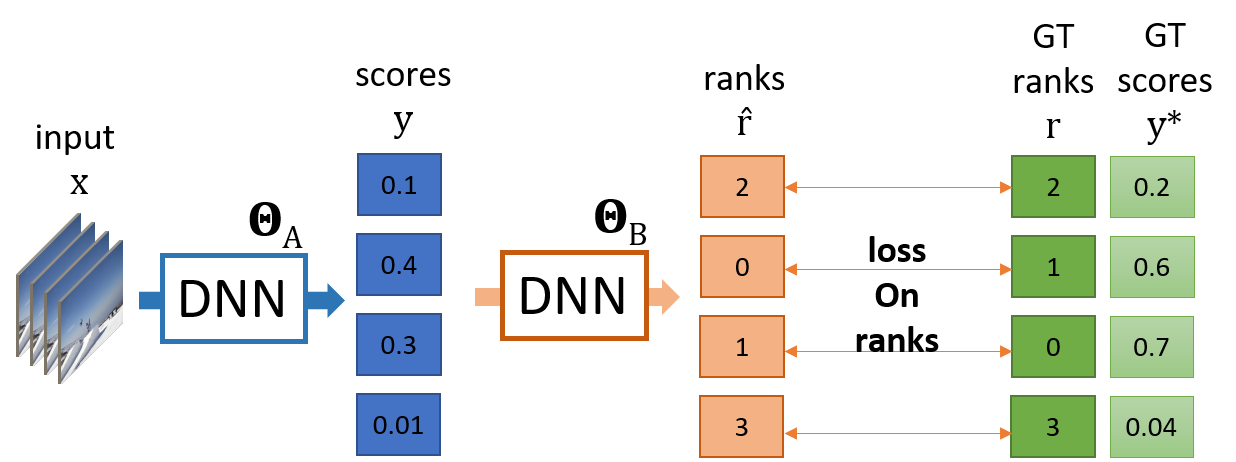

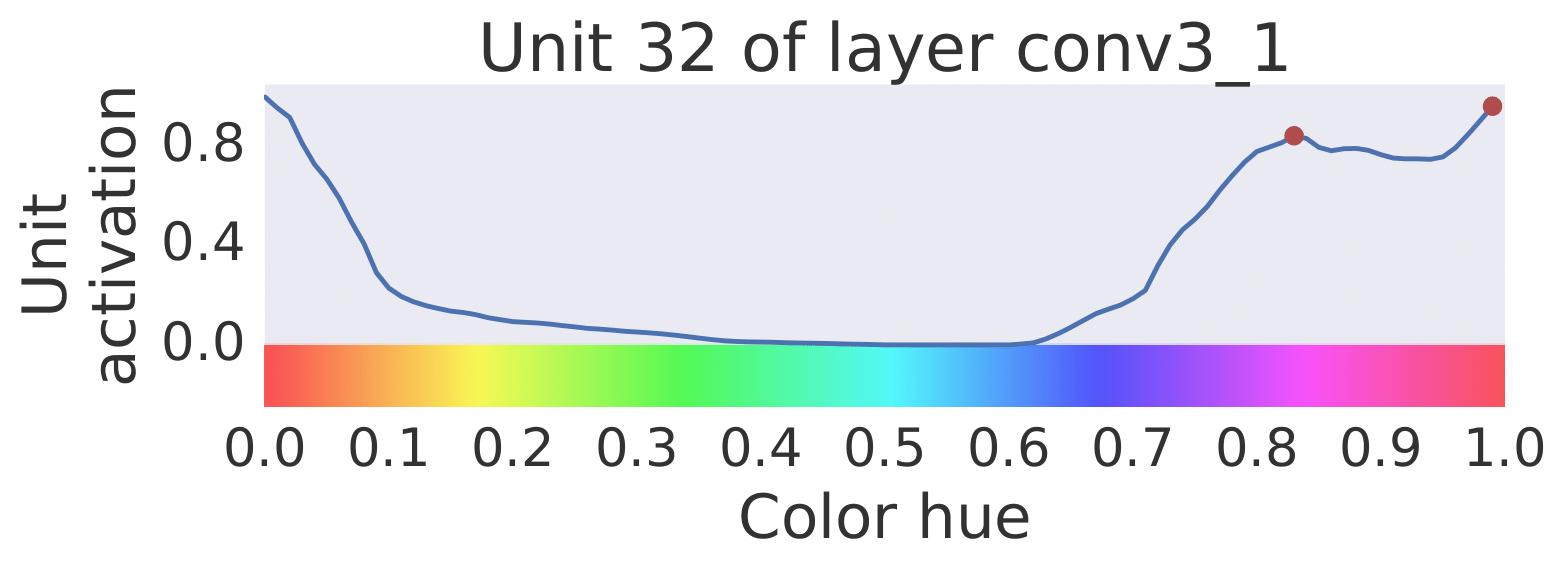

SoDeep: A Sorting Deep Net to Learn Ranking Loss Surrogates

M. Engilberge, L. Chevallier, P. Pérez, M. Cord

CVPR, 2019

Finding Beans in Burgers: Deep Semantic-Visual Embedding with Localization

M. Engilberge, L. Chevallier, P. Pérez, M. Cord

CVPR, 2018